When people first started talking about prompt engineering, many developers dismissed it as a soft skill — something closer to creative writing than real engineering. That view has not aged well. In 2026, the ability to write effective prompts is as valuable as knowing how to write a good SQL query or structure a clean API call. It is not magic. It is craft.

In this guide, we cover everything you need to know about prompt engineering — from core principles to advanced techniques — so you can get reliable, production-ready results from any large language model.

Table of Contents

- Why Prompt Engineering Still Matters With Smarter Models

- The Core Principles of Effective Prompt Engineering

- Advanced Prompt Engineering Techniques Worth Knowing

- Common Prompt Engineering Mistakes to Avoid

- Prompt Engineering in Production Systems

- Tools and Resources for Prompt Engineering

- The Bottom Line

- Frequently Asked Questions

Why Prompt Engineering Still Matters With Smarter Models

A common assumption is that as models get smarter, prompt engineering becomes less important. The opposite has turned out to be true. Because smarter models are more capable, the difference between a mediocre prompt and a well-crafted one shows up as a larger difference in output quality. A vague instruction to GPT-4o gets you a vague answer. A precise, well-structured prompt gets you something you can actually use.

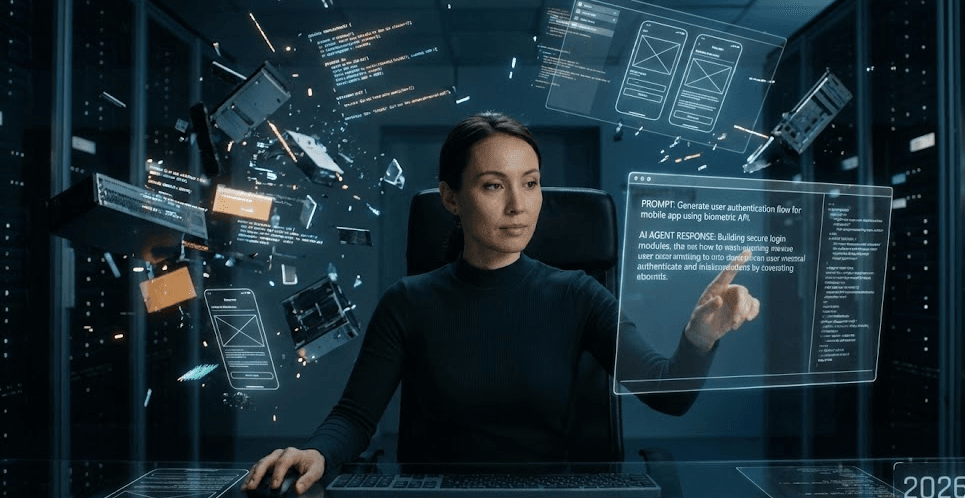

More importantly, as AI moves from chat interfaces into production systems — agents, pipelines, automated workflows — prompts become part of your codebase. A poorly designed prompt in a production system is a bug waiting to happen. Prompt engineering is now a first-class software engineering concern.

This connects directly to the rise of autonomous systems. If you want to understand how prompts power AI agents end-to-end, read our breakdown of how AI agents are changing the way we work in 2026.

The Core Principles of Effective Prompt Engineering

1. Be Specific About the Output Format

Do not just ask for information — tell the model exactly how you want it structured. Do you want a JSON object? A numbered list? A one-paragraph summary? A table? Specifying the output format eliminates ambiguity and makes post-processing far easier in automated pipelines.
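
To make this concrete, here is a minimal sketch of pairing a format instruction with a parser that checks the reply. The field names and both helper functions are hypothetical, chosen to match the example prompt in this section, and no real model is called.

```python
import json

# Hypothetical field names, loosely based on the example prompt in this section.
REQUIRED_FIELDS = ["name", "developer", "context_window", "standout_feature"]


def build_format_prompt(task: str, fields: list[str]) -> str:
    """Append an explicit output-format instruction to a task description."""
    field_list = ", ".join(fields)
    return (
        f"{task}\n"
        "Return a JSON array. Each element must be an object with exactly "
        f"these keys: {field_list}. Return only the JSON, with no commentary."
    )


def parse_records(raw: str, fields: list[str]) -> list[dict]:
    """Parse a model reply and check that every record has the requested keys."""
    records = json.loads(raw)
    if not isinstance(records, list):
        raise ValueError("expected a JSON array")
    for record in records:
        if not isinstance(record, dict):
            raise ValueError("expected every element to be a JSON object")
        missing = [field for field in fields if field not in record]
        if missing:
            raise ValueError(f"record is missing keys: {missing}")
    return records
```

Because the prompt and the parser share one field list, a change to the requested format only has to be made in one place.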

❌ "Tell me about the top AI models."

✅ "List the top 5 AI language models released in 2025. For each one, provide: name, developer, context window size, and one standout feature. Return as a JSON array."

2. Give the Model a Role

Assigning a persona or role to the model primes it to respond from a specific perspective with the right level of depth and tone. This is one of the most reliable prompt engineering techniques, especially for technical content and domain-specific tasks.
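
In code, a role usually ends up as a separate system message. The sketch below assumes the system/user message layout that several chat-style APIs use; the exact field names depend on your provider, and `build_messages` is a hypothetical helper.

```python
def build_messages(role_description: str, task: str) -> list[dict]:
    """Put the role in a system message and the actual request in a user message."""
    return [
        {"role": "system", "content": role_description},
        {"role": "user", "content": task},
    ]


messages = build_messages(
    "You are a senior backend engineer with 10 years of experience in distributed systems.",
    "Review this architecture and identify potential failure points.",
)
```

Keeping the role separate from the task makes it easy to reuse the same persona across many requests.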

✅ "You are a senior backend engineer with 10 years of experience in distributed systems. Review this architecture and identify potential failure points."

3. Use Chain-of-Thought for Complex Reasoning

For tasks that require multi-step reasoning — debugging, analysis, decision-making — asking the model to think step by step consistently improves output quality. It forces the model to show its work, which also makes errors far easier to catch and correct.
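
One practical pattern is to ask for the reasoning and the conclusion in separable parts, so downstream code can use the answer while the reasoning stays available for review. This is a sketch under assumptions: the `FINAL ANSWER:` marker and both helpers are illustrative, not a standard.

```python
import re
from typing import Optional

# Hypothetical marker that separates the reasoning from the conclusion.
COT_SUFFIX = (
    "Think through the logic step by step. "
    "Then give your conclusion on a final line that starts with 'FINAL ANSWER:'."
)


def build_cot_prompt(task: str) -> str:
    """Append the step-by-step instruction to a task."""
    return f"{task}\n\n{COT_SUFFIX}"


def split_reasoning(reply: str) -> tuple[str, Optional[str]]:
    """Return (reasoning, answer). The answer is None if the marker is missing."""
    match = re.search(r"^FINAL ANSWER:\s*(.+)$", reply, flags=re.MULTILINE)
    if match is None:
        return reply.strip(), None
    return reply[: match.start()].strip(), match.group(1).strip()
```

A missing marker is returned as `None` rather than guessed at, so the caller can decide whether to retry.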

✅ "Analyze this Python function for bugs. Think through the logic step by step before giving your final answer."

4. Provide Examples (Few-Shot Prompt Engineering)

If you need the model to match a specific style, format, or pattern, showing it 2–3 examples is far more effective than describing what you want in words. This is called few-shot prompting, and it works remarkably well for classification, formatting, tone-matching, and data extraction tasks.

Zero-shot prompting (no examples) works well for general tasks. One-shot or few-shot prompt engineering is the go-to approach when consistency and precision matter.
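
A few-shot prompt is mostly string assembly. The sketch below shows one way to lay it out; the `Input:` / `Output:` labels and the `build_few_shot_prompt` helper are assumptions made for illustration.

```python
def build_few_shot_prompt(
    instruction: str,
    examples: list[tuple[str, str]],
    query: str,
) -> str:
    """Lay out worked examples before the real input so the model can copy the pattern."""
    parts = [instruction, ""]
    for example_input, example_output in examples:
        parts.append(f"Input: {example_input}")
        parts.append(f"Output: {example_output}")
        parts.append("")
    parts.append(f"Input: {query}")
    parts.append("Output:")
    return "\n".join(parts)


prompt = build_few_shot_prompt(
    "Classify the sentiment of each review as positive or negative.",
    [("Great battery life.", "positive"), ("Stopped working in a week.", "negative")],
    "The screen is gorgeous.",
)
```

Ending the prompt on an open `Output:` line nudges the model to reply with just the label, in the same shape as the examples.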

5. Set Constraints Explicitly

Tell the model what not to do. This can sound counterintuitive, but explicit constraints help reduce off-topic responses and can cut down on hallucinations. Constraints like “do not include information you are not confident about” or “keep the response under 200 words” give the model clear guardrails and make its output more predictable.

Advanced Prompt Engineering Techniques Worth Knowing

ReAct Prompting: Combines reasoning and acting — the model is prompted to reason about what to do, take an action (like a tool call), observe the result, and then reason again. This is the backbone of most production AI agent systems and is essential knowledge for any developer working with agentic AI.
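
The reason, act, observe cycle can be sketched as a small loop. Everything here is a toy under stated assumptions: `scripted_model` stands in for a real LLM call, the `Action: tool[argument]` text format is one common convention rather than a fixed standard, and `calculator` is a hypothetical tool.

```python
from typing import Callable


def calculator(expression: str) -> str:
    """Toy tool: adds integers written as 'a+b+c'."""
    return str(sum(int(part) for part in expression.split("+")))


def scripted_model(transcript: str) -> str:
    """Stand-in for an LLM call. It acts first, then answers from the observation."""
    if "Observation:" not in transcript:
        return "Thought: I should add the numbers.\nAction: calculator[2+3]"
    observation = transcript.rsplit("Observation:", 1)[1].strip()
    return f"Thought: The tool returned a result.\nFinal Answer: {observation}"


def react_loop(
    question: str,
    model: Callable[[str], str],
    tools: dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> str:
    """Alternate model replies and tool calls until the model gives a final answer."""
    transcript = f"Question: {question}"
    for _step in range(max_steps):
        reply = model(transcript)
        transcript += "\n" + reply
        if "Final Answer:" in reply:
            return reply.split("Final Answer:", 1)[1].strip()
        action_line = next(
            (line for line in reply.splitlines() if line.startswith("Action:")), None
        )
        if action_line is None:
            raise ValueError("model reply had neither an action nor a final answer")
        # Parse 'Action: tool_name[argument]' into its two parts.
        name, _sep, argument = action_line[len("Action:"):].strip().partition("[")
        observation = tools[name](argument.rstrip("]"))
        transcript += f"\nObservation: {observation}"
    raise RuntimeError("no final answer within max_steps")
```

The `max_steps` cap matters in practice: without it, a model that never produces a final answer would loop forever.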

Self-Consistency: Run the same prompt multiple times with sampling enabled (a non-zero temperature) and take the majority answer. This prompt engineering technique is particularly useful for high-stakes decisions where a single run might produce an outlier result due to model randomness.
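
A majority vote is simple to sketch. The `sample` argument below is a hypothetical callable that wraps your model call; for the vote to be meaningful it has to sample with some randomness, since identical runs would all agree.

```python
from collections import Counter
from typing import Callable


def self_consistent_answer(
    sample: Callable[[str], str],
    prompt: str,
    runs: int = 5,
) -> tuple[str, float]:
    """Call the model several times and return the majority answer with its vote share."""
    answers = [sample(prompt).strip() for _ in range(runs)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / runs
```

The vote share is a cheap confidence signal: a low share suggests the question is ambiguous or the prompt needs work, and may be worth routing to a human.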

Prompt Chaining: Break complex tasks into smaller prompts where the output of one becomes the input of the next. This gives you far more control over the process and makes debugging much easier compared to a single monolithic prompt.
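
A chain can be as small as a list of templates run in order. In this sketch, `model` is a hypothetical callable wrapping your LLM call, and each template uses an `{input}` placeholder, which is a convention chosen for this example.

```python
from typing import Callable


def run_chain(
    templates: list[str],
    initial_input: str,
    model: Callable[[str], str],
) -> list[str]:
    """Run prompts in sequence, feeding each output into the next template."""
    outputs = []
    current = initial_input
    for template in templates:
        prompt = template.format(input=current)
        current = model(prompt)
        outputs.append(current)  # keep every intermediate result for debugging
    return outputs
```

Returning every intermediate output, not just the last one, is what makes a chain easier to debug than a single monolithic prompt: you can see exactly which step went wrong.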

Tree of Thought (ToT): An evolution of chain-of-thought prompting where the model generates several candidate reasoning paths, evaluates them, and pursues the most promising ones before converging on an answer. Particularly effective for planning and problem-solving tasks with multiple valid approaches.
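
The search structure behind this idea can be sketched as a small beam search. This is a toy under stated assumptions: in a real system `propose` and `score` would each be LLM calls, while here they are plain functions on a hypothetical number puzzle (reach a target sum using steps of 1, 3, or 5).

```python
from typing import Callable, Optional

State = tuple[int, ...]
TARGET = 9  # hypothetical puzzle: pick steps that sum to this value


def propose(state: State) -> list[State]:
    """Generate candidate next thoughts by extending the current path."""
    return [state + (step,) for step in (1, 3, 5)]


def score(state: State) -> int:
    """Rate a partial path: closer to the target is better."""
    return -abs(TARGET - sum(state))


def is_solution(state: State) -> bool:
    return sum(state) == TARGET


def tree_search(
    root: State,
    propose: Callable[[State], list[State]],
    score: Callable[[State], int],
    is_solution: Callable[[State], bool],
    beam_width: int = 2,
    max_depth: int = 4,
) -> Optional[State]:
    """Expand several paths per level and keep only the best-scoring ones."""
    frontier = [root]
    for _depth in range(max_depth):
        candidates = [child for state in frontier for child in propose(state)]
        for candidate in candidates:
            if is_solution(candidate):
                return candidate
        # Prune: only the most promising branches survive to the next level.
        frontier = sorted(candidates, key=score, reverse=True)[:beam_width]
    return None
```

The `beam_width` parameter is the main cost lever: each extra branch kept per level means more model calls in a real deployment.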

Retrieval-Augmented Generation (RAG): Combine prompt engineering with external knowledge retrieval — inject relevant documents or data into the prompt context so the model reasons over fresh, domain-specific information rather than relying solely on training data.
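
The prompt-assembly half of RAG can be sketched in a few lines. The retrieval step below uses naive keyword overlap purely as a stand-in; production systems typically use embedding-based search. Both function names are hypothetical.

```python
def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Rank documents by how many query words they share (toy stand-in for vector search)."""
    query_terms = set(query.lower().split())
    ranked = sorted(
        documents,
        key=lambda doc: len(query_terms & set(doc.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def build_rag_prompt(question: str, documents: list[str], k: int = 2) -> str:
    """Inject the top-k retrieved documents into the prompt as numbered context."""
    context = "\n".join(
        f"[{index}] {doc}"
        for index, doc in enumerate(retrieve(question, documents, k), start=1)
    )
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )
```

Numbering the context entries lets you ask the model to cite which passage it used, which makes answers easier to verify.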

Common Prompt Engineering Mistakes to Avoid

Even experienced developers fall into these prompt engineering traps:

- Being too vague: “Write me a summary” tells the model nothing about length, tone, audience, or format. Always specify.

- Overloading a single prompt: Asking for five different things in one prompt usually produces mediocre results on all five. Break it up.

- Ignoring temperature settings: For factual, deterministic tasks, use low temperature (0–0.3). For creative tasks, higher temperature (0.7–1.0) produces more varied, interesting outputs.

- Not iterating: Treat your first prompt like a first draft. The best prompt engineers test, refine, and version-control their prompts just like code.

- Forgetting context limits: Stuffing too much into a prompt degrades performance. Be intentional about what context is truly necessary.

Prompt Engineering in Production Systems

The stakes of prompt engineering rise significantly when prompts move from experimentation into production. Here is what changes:

Version control your prompts. Treat prompts as code. Store them in your repository, track changes, and document why each version was modified. Prompt regressions are real — a model update or a small wording change can break previously reliable behavior.
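
Beyond storing prompts in the repository, it helps to stamp every logged output with the exact prompt version that produced it. The sketch below is one possible shape for that; `PromptVersion` and `log_record` are hypothetical names, not part of any library.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    name: str
    template: str
    change_note: str  # why this version was modified

    @property
    def fingerprint(self) -> str:
        """Short content hash: changes whenever the template text changes."""
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:12]


def log_record(version: PromptVersion, output: str) -> dict:
    """Attach the prompt name and fingerprint to an output for later tracing."""
    return {
        "prompt": version.name,
        "fingerprint": version.fingerprint,
        "output": output,
    }
```

With the fingerprint in your logs, a regression can be traced back to the specific wording change that introduced it.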

Build evaluation pipelines. You cannot improve what you cannot measure. Define what a good output looks like for your use case and build automated evals that score your prompts against real examples. This is how professional AI teams approach prompt engineering at scale.
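
At its simplest, an eval is a loop over labeled cases that returns a pass rate. In this sketch the case layout (`inputs` and `expected` keys), the `model` callable, and the `check` function are all assumptions; real evals often use fuzzier scoring than exact match.

```python
from typing import Callable


def evaluate_prompt(
    template: str,
    cases: list[dict],
    model: Callable[[str], str],
    check: Callable[[str, str], bool],
) -> float:
    """Return the fraction of cases where the model output passes the check."""
    if not cases:
        raise ValueError("need at least one evaluation case")
    passed = 0
    for case in cases:
        output = model(template.format(**case["inputs"]))
        if check(output, case["expected"]):
            passed += 1
    return passed / len(cases)


def exact_match(output: str, expected: str) -> bool:
    """Simplest possible check: case-insensitive equality after trimming."""
    return output.strip().lower() == expected.strip().lower()
```

Running the same cases against two prompt versions gives you a number to compare, which turns prompt changes from guesswork into measurable decisions.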

Handle failures gracefully. Production prompts need fallback strategies. What happens when the model returns malformed output? Build validation layers that catch unexpected outputs before they propagate downstream.
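
One possible validation layer is a retry loop with a fallback. This is a sketch, assuming the model is a plain callable and that the expected output is a JSON object; `call_with_validation` and `parse_object` are hypothetical helpers.

```python
import json
from typing import Any, Callable


def parse_object(raw: str) -> dict:
    """Validate that the reply is a JSON object. Raises ValueError otherwise."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    return data


def call_with_validation(
    model: Callable[[str], str],
    prompt: str,
    validate: Callable[[str], Any],
    max_attempts: int = 3,
    fallback: Any = None,
) -> Any:
    """Retry on invalid output, then return the fallback instead of propagating bad data."""
    current_prompt = prompt
    for _attempt in range(max_attempts):
        raw = model(current_prompt)
        try:
            return validate(raw)
        except ValueError as error:
            # Tell the model what went wrong on the next attempt.
            current_prompt = (
                f"{prompt}\n\nYour previous reply was invalid ({error}). "
                "Reply with valid JSON only."
            )
    return fallback
```

Returning a known fallback keeps malformed output from reaching downstream code; whether to fall back silently or raise an alert is a design choice that depends on the use case.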

According to OpenAI’s published research, structured prompting techniques consistently outperform unstructured approaches across a wide range of tasks — from code generation to complex reasoning benchmarks.

Tools and Resources for Prompt Engineering

The prompt engineering tooling ecosystem has matured rapidly. Here are the most useful tools in 2026:

- LangSmith: Prompt versioning, testing, and evaluation platform from the LangChain team. Excellent for production prompt management.

- PromptLayer: Logging and analytics for LLM calls — great for understanding how your prompts perform in production.

- OpenAI Playground / Anthropic Console: Best starting points for rapid prompt iteration and testing across different models.

- Weights & Biases Prompts: Integrates prompt tracking into existing ML experiment workflows.

- LMQL: A query language for LLMs that gives developers fine-grained control over prompt structure and output constraints.

For a deeper understanding of the AI landscape these tools operate within, read our guide on the future of artificial intelligence.

The Bottom Line on Prompt Engineering

Prompt engineering is not about finding magic words that unlock hidden model capabilities. It is about clear communication — the same skill that makes a developer good at writing documentation, naming variables, or specifying requirements. The developers who treat prompts as first-class code artifacts, iterate on them systematically, and test them rigorously are the ones getting the most out of AI in 2026.

Start with the basics: be specific, give context, define your output format. Then layer in the advanced prompt engineering techniques as your use cases demand. The investment pays off fast, and the returns compound over time.

Frequently Asked Questions About Prompt Engineering

What is prompt engineering?

Prompt engineering is the practice of designing and refining input instructions given to large language models (LLMs) to consistently produce accurate, useful, and reliable outputs. It involves techniques like role assignment, output format specification, chain-of-thought reasoning, and few-shot examples to guide model behavior.

Is prompt engineering a real job?

Yes. Prompt engineering roles exist at AI labs, enterprise software companies, and startups building AI-powered products. The role has evolved from a standalone position into a cross-functional skill that software engineers, data scientists, and product managers are all expected to develop as AI becomes embedded in more workflows.

What is the difference between zero-shot and few-shot prompt engineering?

Zero-shot prompting gives the model a task with no examples — relying entirely on its training. Few-shot prompting includes 2–5 examples of the desired input-output pattern before the actual task. Few-shot prompt engineering typically produces more consistent and accurate results for specialized or format-sensitive tasks.

Does prompt engineering work the same across all AI models?

Not exactly. While core principles apply broadly, each model responds differently to specific prompt structures, role assignments, and formatting conventions. GPT-4o, Claude, Gemini, and open-source models like Llama all have their own behavioral tendencies. Good prompt engineers test across models and adapt their approach accordingly.

How do I get better at prompt engineering?

The fastest way to improve at prompt engineering is deliberate practice with real tasks. Start by applying the five core principles in this guide to problems you are already solving. Build a personal library of prompts that work well, document what makes them effective, and iterate. Reading published prompt engineering research and studying examples from the AI community also accelerates progress significantly.